AutoOps for Elastic Cluster

Elastic has announced on Feb 25 that Elastic AutoOps is now free for all.

https://www.elastic.co/blog/autoops-free

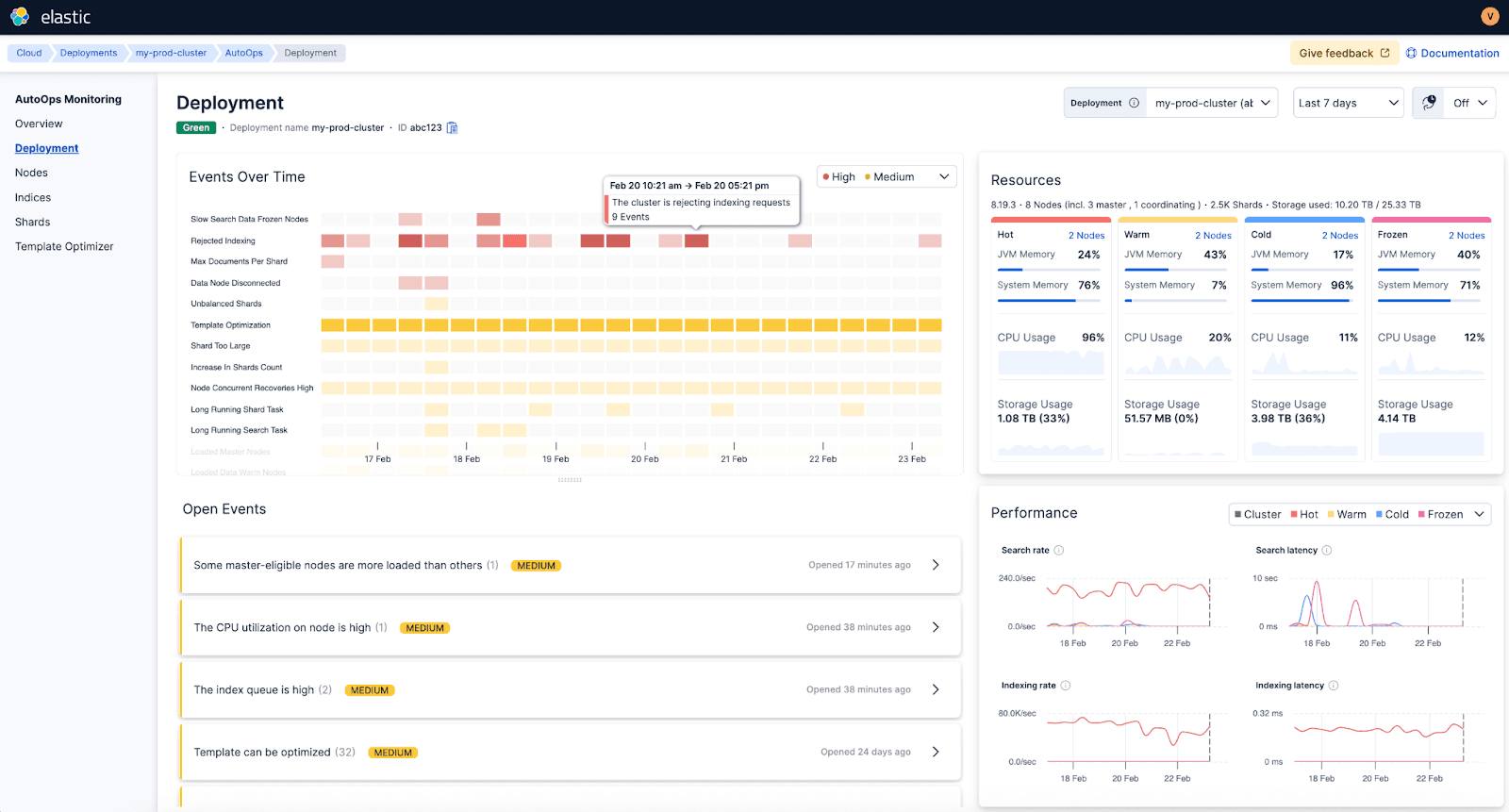

Elastic AutoOps is a SaaS service Elastic provides that helps you to gain critical insight for your cluster operation. It collects the operational metadata (Node stats, cluster settings and shard states etc) and ship to AutoOps Service on Elastic Cloud for analytics and operational dashboards.

What it means for Elastic users and administrator teams?

Elastic has announced on Feb 25 that Elastic AutoOps is now free for all.

https://www.elastic.co/search-labs/blog/elastic-autoops-free-for-self-managed-elasticsearch

Elastic AutoOps is a SaaS service Elastic provides that helps you to gain critical insight for your cluster operation. It collects the operational metadata (Node stats, cluster settings and shard states etc) and ship to AutoOps Service on Elastic Cloud for analytics and operational dashboards.

This means that Elastic Cloud provides a free cloud service to monitor your cluster. (It does not collect your payload data in the cluster, only meta data for cluster operations)

For those that utilize this free service, it could reduce the operational overhead for managing ELK cluster significantly as Elastic provides best practice AI driven monitoring framework of your cluster, it also provides you recommendations for mitigations.

A screen shot from the Elastic AutoOps intro.

Well, we all know from our life experiences that "free" product and services if often not so "free" in the other aspects. This is a service that costs computing power and maintenance, but I guess this provides Elastic as a product vendor the critical insight of how customers are using their products and key insights of what is right and what is wrong with the product implementation out in the field. Of course this insight is worth a lot for product development and commercial reasons.

For users and administrators of ELK cluster, the pros and cons are obvious:

Pros:

No need to reinvent the wheel and build up up-to-date routines, tooling setups for monitoring of the cluster when the best-in-class tool is free to use

Reduce lead time for troubleshooting significantly with the support of AI engines online at Elastic Cloud

No longer rely on key person or competence to manage the cluster operation on a day-to-day basis

Developers, SRE and IT security analysts that are heavy users of the ELK stack will be able to have a real time view of how the cluster is working in real-time if they hit any performance issue or need to troubleshoot

Cons:

You need to submit the cluster metadata to Elastic Cloud through the AutoOps agent

Monitoring of the cluster becomes more of a black-box and you just consume the data (it may not be a con as end-users are more interested of the outcome from Elastic solutions than the cluster itself)

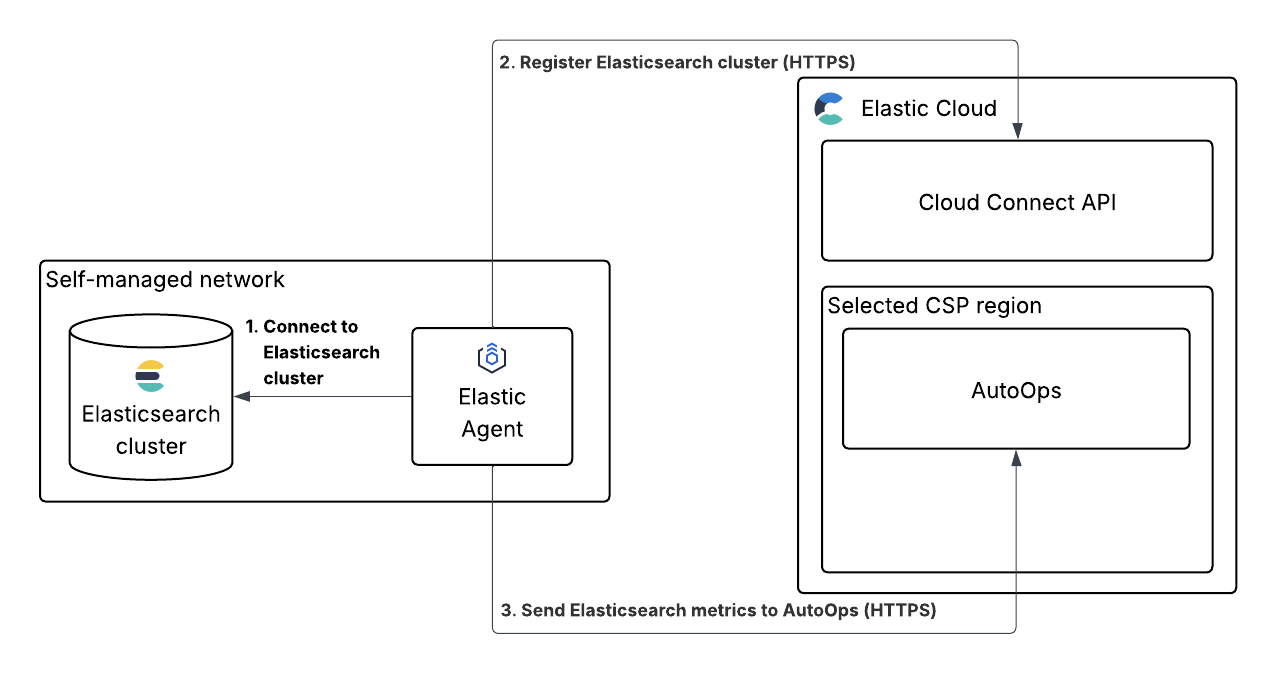

How does this AutoOps agent work? (source Elastic doc)

Impact of this for organizations and teams using Elastic stack for search, observability and security:

You probably no longer need as many ELK cluster operational resources as ealier when this monitoring was sole an in-house action

Users of Elastic stack will have transparency of how the cluster is working right now, which significantly reduce their troubleshooting time when they hit issues

It becomes easier to further develop and expand the cluster as shortcomings of the current environment becomes much clear through the insightful data in AutoOps

The question we need to ask is what we will do when this feature will be charged, the cost of savings in reduced number of human monitoring resources may justify a price tag

Before the cloud age, vendors would probably sell a tool or function like AutoOps as an additional feature with license fee. Now Elastic chooses to provide this as a free service in the Cloud. For smaller organizations, it is a no brainer and many probably is already running on serverless. For others this provides an opportunity to move the cost from maintenance resources to more AI driven automation and operation in future. This is happening anyway with the rapid expansion of Agentic AI.

Obserability in the Agentic AI era

Langchain published on Feb 21:st a very insightful and structured paper about Agent observability in the Agentic AI era which is taking the industry with storm.

https://blog.langchain.com/agent-observability-powers-agent-evaluation/

If we think it is a challenge migrating monitoring to observability for the microservices and kubernetes containers, degree of difficulties and challenge grow hundred times in the Agentic AI due to the following changed behavior of software:

Testing and verification appears only at run-time, traditional tests are obsolete

Number of code lines to trace and debug grow to astronomical level

Indeterministic nature of the LLM reasoning outcome

The interaction model between the AI agents

Langchain published on Feb 21:st a very insightful and structured paper about Agent observability in the Agentic AI era which is taking the industry with storm.

https://blog.langchain.com/agent-observability-powers-agent-evaluation/

Here are some short summaries of the content:

New challenges compare with traditional software debugging

From debugging code to debugging reasoning

Change of testing methodology for software when agent behavior emerges only at runtime

Major observability components like runs, traces and threads in agent calls

Growth of tracing data will be gigantic for debugging purposes

Mitigations:

Single-step evaluation

Full-turn evaluation

Multi-turn evaluation

Other evaluation concepts

Offline evaluation

Online evaluation

Ad-hoc evaluation

An example of troubleshooting workflow for Agents:

User reports incorrect behavior

Find the production trace

Extract the state at the failure point

Create a test case from that exact state

Fix and validate

On the blog page there are as well a number of case studies of using Langsmith for Agent Observability troubleshooting. As this is so new and fresh, most of the tooling vendors are yet catching up frenetically in this area.

https://blog.langchain.com/tag/case-studies/

I asked Claude to provide me with a summary of the AI agent observability field, and below are the summary table that Claude has provided based on the evaluation concepts that Langchain provided in the blogpost.

My take away:

If we think it is a challenge migrating monitoring to observability for the microservices and kubernetes containers, degree of difficulties and challenge grow hundred times in the Agentic AI due to the following changed behavior of software:

Testing and verification appears only at run-time, traditional tests are obsolete

Number of code lines to trace and debug grow to astronomical level

Non-deterministic nature of the LLM reasoning outcome

The interaction model between the AI agents

We are at the dawn of a new era with a lot of doors of opportunity open for innovation and new technology. Thanks to the fact that we have smarter AI LLM and tools now, those observability challenges with huge datasets and iterative testing cycles is just what AI is good at.

Vendor Capability Comparison for Agent Observability

Summarized by Claude

The three IT observability incumbents (Dynatrace, Elastic, Splunk/Cisco) are all moving fast, but their approaches reflect their heritage.

The notable difference vs. purpose-built tools like LangSmith and Arize: the incumbents excel at correlating agent behavior with the full application/infrastructure stack, but LangSmith remains the only platform where Runs, Traces, and Threads are truly first-class primitives — particularly for building evaluation datasets directly from production traces, which is the most critical workflow the blog post describes.

Agent Observability: Vendor Capability Comparison

Mapping IT observability vendor solutions to the LangChain framework for agent observability — Runs · Traces · Threads · Evaluation

| Observability Area (LangChain Framework) |

🔵 Dynatrace Grail + Davis AI + DT Intelligence |

🟡 Elastic Elastic Observability + EDOT |

🟠 Splunk / Cisco Observability Cloud + AppDynamics |

🟣 Datadog LLM Observability |

🟢 New Relic AI Monitoring |

🔴 LangSmith (LangChain) — Purpose-built |

⚪ Arize AI Phoenix + AX |

|---|---|---|---|---|---|---|---|

| PRIMITIVE 1: RUNS — Capturing individual LLM execution steps (inputs, outputs, tool choices at each step) | |||||||

| Single LLM Call Tracing Input/output capture per call |

GA

|

GA

|

GA

|

GA

|

GA

|

GA

|

GA

|

| Tool Call Visibility Which tools the agent invoked, with what arguments |

GA

|

GA

|

GA

|

GA

|

Preview

|

GA

|

GA

|

| Cost & Token Monitoring Token usage, cost-per-request tracking |

GA

|

GA

|

GA

|

GA

|

GA

|

GA

|

GA

|

| PRIMITIVE 2: TRACES — Capturing full agent execution trajectories (all steps, tool calls, nested structure) | |||||||

| End-to-End Agent Trace Multi-step trajectory from input to final output |

GA

|

GA

|

GA

|

GA

|

GA

|

GA

|

GA

|

| Topology & Dependency Mapping How agents, tools, and services relate to each other |

GA

|

GA

|

GA

|

GA

|

Preview

|

GA

|

GA

|

| RAG / Retrieval Observability Vector DB, retrieval quality, context grounding |

GA

|

GA

|

GA

|

GA

|

GA

|

GA

|

GA

|

| Guardrails & Safety Monitoring Content filtering, prompt injection, policy compliance |

GA

|

GA

|

GA

|

GA

|

Preview

|

GA

|

GA

|

| PRIMITIVE 3: THREADS — Multi-turn conversation context across sessions (state evolution, context accumulation) | |||||||

| Multi-Turn Session Tracking Grouping traces into conversational threads |

GA

|

GA

|

Preview (Alpha)

|

GA

|

Preview

|

GA

|

GA

|

| State & Memory Tracking How agent memory and artifacts change across turns |

GA

|

Preview

|

Preview

|

Preview

|

Roadmap

|

GA

|

GA

|

| EVALUATION — Assessing agent quality: single-step, full-turn, multi-turn; offline, online, and ad-hoc | |||||||

| Single-Step Evaluation Did the agent make the right decision at a specific step? |

GA

|

GA

|

Preview

|

GA

|

Preview

|

GA

|

GA

|

| Full-Turn (Trajectory) Evaluation Did the agent execute the full task correctly end-to-end? |

GA

|

GA

|

GA

|

GA

|

GA

|

GA

|

GA

|

| Multi-Turn Evaluation Does the agent maintain context correctly over a full session? |

Preview

|

Preview

|

Preview (Alpha)

|

Preview

|

Roadmap

|

GA

|

GA

|

| Online (Production) Evaluation Continuous quality checks on live agent traffic |

GA

|

GA

|

GA

|

GA

|

GA

|

GA

|

GA

|

| Offline Evaluation / Datasets Building test suites from production traces; pre-deployment testing |

GA

|

Preview

|

Preview (Alpha)

|

GA

|

Roadmap

|

GA

|

GA

|

| Ad-Hoc Insights / AI-Assisted Analysis Querying traces at scale; pattern discovery; LLM-as-judge |

GA

|

GA

|

GA

|

GA

|

GA

|

GA

|

GA

|

| PLATFORM DIFFERENTIATORS — OTel alignment, framework support, unique strengths | |||||||

| OpenTelemetry & Framework Support | GA

|

GA

|

GA

|

GA

|

GA

|

GA

|

GA

|

| Key Differentiator / Unique Strength | 🔵 Causal AI + Deterministic Agents: Davis AI provides causal root cause analysis grounded in real-time Smartscape topology. Dynatrace Intelligence fuses deterministic + agentic AI for trusted autonomous operations. 12x better problem resolution vs. pure LLM agents. | 🟡 Search + Observability + Security Unified: Elastic combines LLM observability, security (SIEM), and search in one platform. Strong OTel ecosystem via EDOT. Leader in 2025 Gartner Magic Quadrant for Observability Platforms. | 🟠 Cisco AI Defense + AGNTCY Standards: Unique network/security heritage via Cisco integration enables AI risk detection at infrastructure level. Strong OpenTelemetry contribution and vendor-neutral AGNTCY standard for agent quality metrics. | 🟣 Breadth + APM Correlation: LLM traces integrated directly alongside existing APM, infra, and security data. LLM Experiments allows prompt testing pre-deployment. Watchdog AI for continuous anomaly detection. Google ADK first-mover integration. | 🟢 Application-Centric Depth + Pricing: Strong APM heritage with code-level diagnostics. Predictable data-ingestion pricing. SRE Agent integrates with ServiceNow, PagerDuty, GitHub for agentic remediation. 30% QoQ growth in AI Monitoring adoption. | 🔴 Purpose-Built for Agent Evaluation: Only vendor where Runs, Traces, and Threads are first-class primitives. Production traces automatically become offline test datasets. Deepest LangChain/LangGraph integration. Insights Agent for AI-assisted trace analysis at scale. | ⚪ ML Pedigree + Open Source: Only vendor with traditional ML model monitoring (drift, bias) converging with LLM agent observability. Arize Phoenix is open-source and OTel-native. Strong RAG evaluation with TruLens. Best embedding-level drift detection. |

Sources: LangChain Blog (Feb 2026), Dynatrace Docs & Blog (Jan–Feb 2026), Elastic Docs & Observability Labs (2025–2026), Splunk Blog & Docs (Q1 2026), Datadog, New Relic, Arize AI product documentation. Status as of February 2026. Features evolving rapidly — verify current availability with vendors.

Data summarized by Claude on Feb 26,2025

Disclaimer: AI can make mistakes, for deep dive please doublecheck the answers on relevant sources.